A while back, Kubernetes announced that it was deprecating Docker. More precisely, it was deprecating a component called dockershim, and with it the ability to use Docker as the container runtime. The deprecation was announced with Kubernetes 1.20 in December 2020, and dockershim was removed in version 1.24, released in May 2022.

In this blog post, I'll explain why this happened and the impact it's expected to have on Docker container orchestration.

Overview

We know that Kubernetes is a container orchestrator. Its job is to pull in container images, start them, stop them, move them around from broken servers to healthy servers, and so on.

So, with Docker removed from Kubernetes, how will we run containers? And can we even run our old Docker containers anymore? Or do we need to switch to another container format and build them with another application? Do we need to adapt our containers to this change?

Let's see the answers to all these questions.

Kubernetes Dropping Docker: What Is Happening?

Here are the short answers to the above questions:

- Docker is not the only thing that can run containers. With Docker removed, most Kubernetes administrators will migrate to something called containerd. They can choose other applications, such as CRI-O, but containerd is the most common migration path. We will see how this change helps Kubernetes run containers in a much simpler and more efficient way. In fact, stand-alone Docker already uses containerd under the hood to run containers. Confusing?

You can check out this blog post where we cleared up the mystery of Docker vs. containerd. If you read that, it will make the entire subject here much easier to understand. You will see what containerd is, how it works, and why it makes much more sense for Kubernetes to use that instead of Docker.

- "Docker containers" will still work smoothly under Kubernetes. In fact, so-called Docker containers are not really Docker-specific. They follow a standard created by the Open Container Initiative (OCI). This standard defines how containers should be built and how they should be packaged so that any tool can work with them, no matter if it's Docker or something else.

- We don't need to modify our current containers. They run the same under Docker, containerd, or whatever kind of container engine we use. We say "container engine" loosely here as the technical term for this is actually container runtime. This runtime is responsible for most things revolving around containers. It is responsible for downloading and uploading images, starting and stopping containers, configuring networking for them, and so on.
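Before migrating, it helps to know which runtime each node is using today. Kubernetes nodes report this in the `containerRuntimeVersion` field of their status (the CONTAINER-RUNTIME column of `kubectl get nodes -o wide`), in the form `name://version`. Here is a minimal sketch of how that string could be interpreted. The helper names and the sample data are made up for illustration.

```python
# Hypothetical helpers; only the "name://version" string format comes from Kubernetes.

def parse_runtime(runtime_version: str) -> tuple[str, str]:
    """Split a string such as 'containerd://1.6.8' into (name, version)."""
    name, separator, version = runtime_version.partition("://")
    if not separator:
        raise ValueError(f"unexpected runtime string: {runtime_version!r}")
    return name, version


def nodes_using_docker(nodes: dict[str, str]) -> list[str]:
    """Return the names of nodes whose reported runtime is Docker."""
    return sorted(
        node for node, runtime in nodes.items()
        if parse_runtime(runtime)[0] == "docker"
    )


# Made-up sample: node name -> reported containerRuntimeVersion
sample_nodes = {
    "worker-1": "docker://20.10.7",
    "worker-2": "containerd://1.6.8",
}

print(nodes_using_docker(sample_nodes))
```

Nodes that show up in this list are the ones that would need a runtime change.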


What Kind of Containers Will Be Disrupted When Kubernetes Drops Docker Support? Edge Cases

Ok, we said that the containers we have don't need to be modified or adapted in any way for this transition from Docker to containerd. But we should make a disclaimer. This will usually be the case for almost everyone. But, as with anything in life, there can be exceptions.

For example, let's say we modified our containers to talk to Docker directly and send it commands such as "docker start" or "docker stop." This is not standard procedure. Usually, Docker is the boss: it controls the life of containers and tells them what to do. Containers live in an isolated box, doing their work internally and not bothering Docker with anything. But if we have special requirements, we can also go in the reverse direction and make containers tell Docker what to do. In that case, a container reaches out of its little box into the "external world," contacts Docker, and says: "Hey, can you do this thing for me?" For edge cases like this, some work will be necessary to adapt the containers to speak to containerd instead of Docker.

If you're interested in other edge cases that might require you to adapt your containers, you can check this link from the Kubernetes website: Finding if your app has dependencies on Docker. As mentioned, if you use standard container images and did not make any out-of-the-ordinary, non-standard modifications, chances are you don't need to do anything to transition from Docker to containerd.
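A container that talks to Docker usually does so through the Docker socket, mounted into the pod as a `hostPath` volume. As a rough sketch, a check for this could look like the following. It assumes the pod manifest is already available as a Python dictionary (for example, parsed from `kubectl get pods -o json`); the function name and the sample pods are hypothetical, and a match only tells us the pod deserves a closer look.

```python
# Hypothetical check: does a pod manifest mount the Docker socket from the host?

def mounts_docker_socket(pod: dict) -> bool:
    """Return True if any hostPath volume in the pod points at docker.sock."""
    volumes = pod.get("spec", {}).get("volumes") or []
    for volume in volumes:
        host_path = volume.get("hostPath") or {}
        if host_path.get("path", "").endswith("docker.sock"):
            return True
    return False


# Made-up sample manifests, reduced to the fields the check looks at
ci_pod = {
    "metadata": {"name": "ci-builder"},
    "spec": {"volumes": [
        {"name": "dockersock", "hostPath": {"path": "/var/run/docker.sock"}},
    ]},
}
web_pod = {
    "metadata": {"name": "web"},
    "spec": {"volumes": [{"name": "cache", "emptyDir": {}}]},
}

for pod in (ci_pod, web_pod):
    print(pod["metadata"]["name"], mounts_docker_socket(pod))
```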

What Was Dockershim? Why Is Kubernetes Dropping Docker Support?

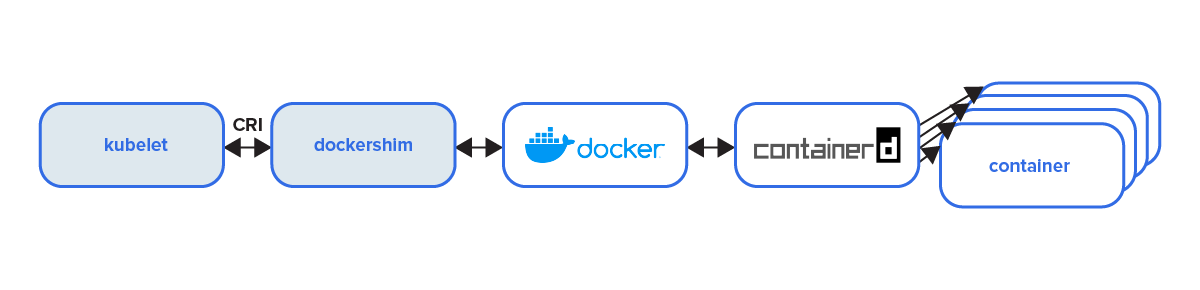

When Kubernetes was still young, let's call it the "old Docker era," the architecture that dealt with containers looked something like this:

Let's find an easy way to understand how it worked.

First of all, Kubernetes has a lot of components under the hood. One of them is kubelet. This is just a program, a so-called "node agent." Imagine we have 20 worker nodes in our Kubernetes cluster. These are simply 20 different servers, specialized to work with Kubernetes and host containers.

Each server will have this kubelet application running on it. kubelet allows these servers to receive instructions about what they need to do. So at one point, they will receive instructions to run some containers. But kubelet cannot run containers on its own. However, it can pass this instruction further along.

Now, let's think about what actually needs to happen. kubelet has to somehow tell Docker, "Hey buddy, could you download this container image and then start the container?" But there is a problem. Each application speaks its own language. We, as human beings, talk to Docker by issuing commands such as "docker start," "docker stop," "docker pull," and so on.

But kubelet does not speak this language. Most programs communicate with each other through so-called APIs, Application Programming Interfaces. kubelet has one API, and Docker has another. It's basically like one speaks Chinese, and the other speaks English. The "Chinese" language that kubelet speaks is defined by what is called the CRI, the Container Runtime Interface. We will see what this is at the end of our discussion. So that's why dockershim was needed. It acts as a translator, sitting between kubelet and Docker, as shown in the diagram above.

Now, let's summarize. With Docker included in Kubernetes, this is what happens:

- kubelet receives some instructions, and it knows it has to start some container.

- It sends this instruction to dockershim, in a language that dockershim was programmed to understand.

- dockershim receives this instruction: "Start this container which has the following properties." Next, it can translate that message into a language that Docker can understand.

- Now that Docker has received the translated message from dockershim, it knows what to do and can work its magic. But, actually, Docker itself does not download container images or start containers. It has a component inside that takes care of these things: a container runtime called containerd. We explained this in detail in the Docker vs. containerd article mentioned earlier.

- So, finally, after containerd receives instructions from Docker, it can now download the container image, prepare it however it is required, and finish the job by starting a container.

We can see that these are quite a lot of steps. kubelet sends a message to dockershim. dockershim translates it. dockershim sends it to Docker. Docker sends it to containerd. So many messages are sent from application to application until something can finally happen. One big reason Kubernetes developers wanted to remove Docker is that there are too many intermediaries. There's too much going on, too many steps to start a container. So, how could this be simplified? Well, check this out!
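To make the chain of hand-offs concrete, here is a toy model of it. This is only a sketch: every class and method name is a made-up stand-in, not a real Kubernetes, Docker, or containerd API. Each component records its name in a trace and then passes the request along.

```python
# Toy model of the old call chain. All names are hypothetical stand-ins.

class Containerd:
    def __init__(self):
        self.running = []

    def run(self, image, trace):
        trace.append("containerd")
        self.running.append(image)  # the runtime does the actual work


class Docker:
    def __init__(self, containerd):
        self.containerd = containerd

    def docker_run(self, image, trace):
        trace.append("Docker")
        self.containerd.run(image, trace)  # Docker delegates to containerd


class Dockershim:
    def __init__(self, docker):
        self.docker = docker

    def cri_start_container(self, image, trace):
        trace.append("dockershim")
        # Translate the CRI-style request into a Docker-style request
        self.docker.docker_run(image, trace)


class Kubelet:
    def __init__(self, cri_endpoint):
        self.cri_endpoint = cri_endpoint

    def start(self, image):
        trace = ["kubelet"]
        self.cri_endpoint.cri_start_container(image, trace)
        return trace


containerd = Containerd()
kubelet = Kubelet(Dockershim(Docker(containerd)))
print(" -> ".join(kubelet.start("nginx:1.25")))
```

The printed trace lists four components, with three hand-offs between them, before a single container starts.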

Kubernetes Docker Deprecation Makes Everything Simpler

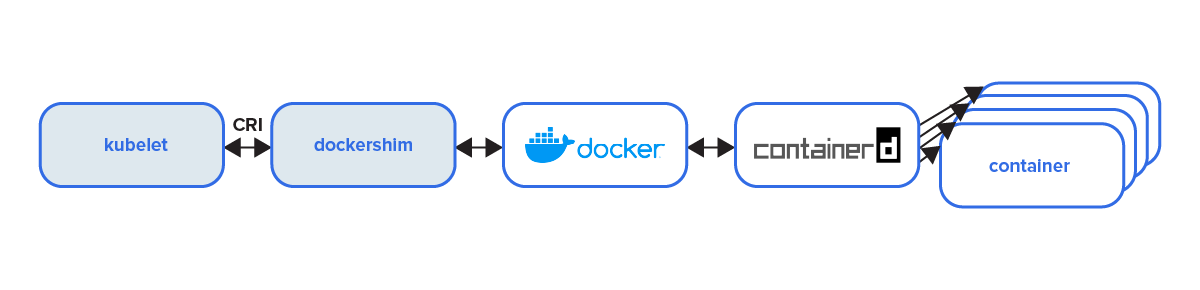

The image above shows how things work after Kubernetes removed dockershim and Docker. It is now a clean and simple process. And we can see the reason for this change is quite straightforward.

We mentioned that Docker does not actually download container images or start containers. It has this component inside, called containerd, that does those things. So Kubernetes developers said, "Why use this heavy thing called Docker, with its many components? Why go through dockershim, then Docker, until we finally reach containerd? Let's just use what we need. containerd is the one doing the actual job, so let's go from kubelet straight to containerd."

That's exactly what they achieved by removing dockershim and Docker. They extracted this container runtime called containerd and plugged it into Kubernetes. So now, things are much simpler:

- When kubelet wants to start a container, it speaks to containerd directly.

- Of course, kubelet and containerd still don't speak the same language. However, containerd includes a mini-translator, a plugin called the CRI plugin, that does this translation internally.
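The two steps above can be sketched as a toy model. As before, the class and method names are hypothetical and only illustrate the shape of the interaction: the translation now happens inside containerd, so kubelet hands the request over exactly once.

```python
# Toy model of the simplified call chain. All names are hypothetical stand-ins.

class Containerd:
    def __init__(self):
        self.running = []

    def cri_start_container(self, image, trace):
        # Stand-in for the built-in CRI plugin: it accepts the CRI-style
        # request and calls containerd's own logic in the same process.
        trace.append("containerd")
        self._run(image)

    def _run(self, image):
        self.running.append(image)  # the runtime does the actual work


class Kubelet:
    def __init__(self, cri_endpoint):
        self.cri_endpoint = cri_endpoint

    def start(self, image):
        trace = ["kubelet"]
        self.cri_endpoint.cri_start_container(image, trace)
        return trace


containerd = Containerd()
kubelet = Kubelet(containerd)
print(" -> ".join(kubelet.start("nginx:1.25")))
```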

So now, with fewer components and fewer translation steps, there is less overhead every time a container starts. Imagine if we could start one container 0.1 seconds faster. It might not seem like a lot, but when we start hundreds of containers, that time adds up, and we can improve performance noticeably.
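As a quick back-of-the-envelope calculation, here is how that hypothetical saving adds up. The 0.1 seconds is the imagined figure from the paragraph above, not a measurement, and the count of 500 containers is an assumption. The sketch also assumes containers start one after another; with parallel starts, the wall-clock saving would be smaller.

```python
# Back-of-the-envelope arithmetic with hypothetical numbers (not measurements).

def total_saving_seconds(saving_ms_per_container: int, containers: int) -> float:
    """Total time saved if every container start is faster by the given amount."""
    return saving_ms_per_container * containers / 1000


# 0.1 s = 100 ms saved per container, across an assumed 500 container starts
print(total_saving_seconds(100, 500))  # 50.0
```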

What's the Deal with CRI? Why Is It Needed?

But a mystery still remains. We could ask ourselves: why doesn't kubelet know how to talk to Docker or containerd directly? Why don't they have compatible APIs? Surely, the developers could have implemented this into kubelet and taught it to speak to Docker or containerd. Well, this is by design. kubelet is written against the Container Runtime Interface rather than against any specific runtime.

To make this easy to understand, consider the following:

We can plug anything we want into a USB port on our laptops. So, if we buy a memory stick, we don't need to worry about compatibility. The laptop can implement its internal logic. The memory stick can also choose its own logic. However, the USB port makes them compatible, even if each one's internal logic is different.

We can connect a memory stick from Kingston, but also one from Verbatim or ADATA, even though they are most likely very different inside. This way, laptops are free to change their internal logic however they want, from model to model. Memory sticks can also choose whatever logic they want. So, each can go its own way, but the USB port will always keep them compatible with each other.

This interface, the middle point between the laptop and the memory stick, remains constant. So the laptop knows what to expect when something is plugged in. It knows that the electronic circuit of the device follows a certain format. And the memory stick knows what to expect when connecting to a laptop. From its point of view, the electronic circuit is also clearly known.

As the CRI name suggests, the Container Runtime Interface is also, well… an interface. And just like the USB port, it allows things to connect with each other. It's a middle point that keeps things compatible. With it, kubelet can change its internal logic however it wants.

Container runtimes can also choose whatever internal logic they want. The CRI, as a middle point, allows kubelet to connect with any container runtime that is CRI-compliant. So, instead of containerd, we could use any other container runtime. And there are quite a few to choose from. This way, system administrators can pick whatever container runtime works best for them instead of being forced to use containerd.

To recap, the CRI creates a standardized interface. kubelet does not have to worry about how to tell container runtimes to do something. It does this in a standardized way, always sending the same message if it wants to "start container X," no matter what container runtime is at the other end.

Furthermore, the container runtimes also get a standardized way to receive messages. They know that in a CRI format, they will always get a specific message telling them they need to "start container X" in a specific language. So, the developers of the runtime know what kind of messages to expect for their app.

The cool thing about the CRI is that, like the USB port, it allows developers to pick whatever internal logic they want for their container runtime. All that kubelet does is ask the runtime to start a container. But it does not tell it HOW to do it or what steps it should go through. The container runtime can do it in whatever way it thinks is best. And this allows for a lot of diversity.

Developers can optimize different container runtimes for different things. And Kubernetes administrators can pick the runtime that works best for their use case.
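In code, this "USB port" idea is simply an interface. The sketch below is a deliberately tiny illustration: the real CRI is a gRPC API with many more calls, while here it is reduced to a single hypothetical method, `start_container`. The runtime classes are stand-ins that only return a message.

```python
# Minimal illustration of an interface with interchangeable implementations.
# The single-method "CRI" and all class names here are hypothetical.
from typing import Protocol


class ContainerRuntime(Protocol):
    def start_container(self, image: str) -> str:
        """Start a container from the image and report what happened."""
        ...


class ContainerdRuntime:
    def start_container(self, image: str) -> str:
        return f"containerd started {image}"  # internal logic is its own business


class CrioRuntime:
    def start_container(self, image: str) -> str:
        return f"cri-o started {image}"  # different internals, same interface


class Kubelet:
    def __init__(self, runtime: ContainerRuntime):
        self.runtime = runtime

    def run(self, image: str) -> str:
        # kubelet always sends the same request, whatever runtime is plugged in
        return self.runtime.start_container(image)


for runtime in (ContainerdRuntime(), CrioRuntime()):
    print(Kubelet(runtime).run("nginx:1.25"))
```

Swapping the runtime changes nothing in `Kubelet`, which is the whole point of having the interface in the middle.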


Conclusion

Hopefully, this clears up any mystery as to why Docker was removed from Kubernetes. As we can see, the change removes intermediaries between kubelet and the container runtime and also gives us the freedom to choose any CRI-compliant container runtime we want. Things are much more flexible nowadays with Kubernetes.

