I am not able to use models permitted under Services on AWS AI Playground | KodeKloud

I am getting this error in my local terminal:

          return new AuthenticationError(status, error, message, headers);
                 ^

    AuthenticationError: 401 User: arn:aws:iam::822233328238:user/kk_labs_user_504951 is not authorized to perform: bedrock-mantle:CreateInference on resource: arn:aws:bedrock-mantle:us-east-1:822233328238:project/default because no identity-based policy allows the bedrock-mantle:CreateInference action
        at Function.generate (/Users/t25/Downloads/genai/node_modules/openai/src/core/error.ts:76:14)
        at OpenAI.makeStatusError (/Users/t25/Downloads/genai/node_modules/openai/src/client.ts:494:28)
        at OpenAI.makeRequest (/Users/t25/Downloads/genai/node_modules/openai/src/client.ts:745:24)
        at process.processTicksAndRejections (node:internal/process/task_queues:105:5)
        at async <anonymous> (/Users/taran/Downloads/genai/src/index.ts:11:18) {
      status: 401,
      headers: Headers {},
      requestID: 'req_lskvufom64ftqfqvz7zef37mieeqgdxnuls3w77jtqm4k7xfitva',
      error: {
        code: 'access_denied',
        message: 'User: arn:aws:iam::822233328238:user/kk_labs_user_504951 is not authorized to perform: bedrock-mantle:CreateInference on resource: arn:aws:bedrock-mantle:us-east-1:822233328238:project/default because no identity-based policy allows the bedrock-mantle:CreateInference action',
        param: null,
        type: 'permission_denied_error'
      },
      code: 'access_denied',
      param: null,
      type: 'permission_denied_error'
    }

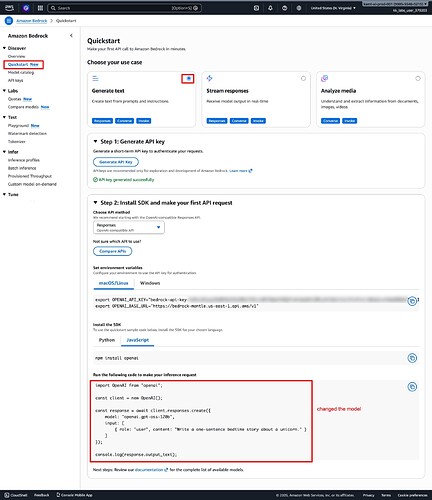
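
The important part of that trace is the IAM message: it names the user, the denied action, and the resource. Below is a minimal sketch for pulling those three values out of the message so they are easier to compare against the lab's allowed services. `parseAccessDenied` and `DeniedAccess` are hypothetical names made up for this example, not part of the `openai` package or any AWS SDK, and the pattern only assumes the message follows the wording shown in the error above.

```typescript
// Hypothetical helper (not from openai or any AWS SDK): extracts the principal,
// action, and resource from an IAM "is not authorized to perform" message.
interface DeniedAccess {
  principal: string;
  action: string;
  resource: string;
}

function parseAccessDenied(message: string): DeniedAccess | null {
  // Assumes the wording seen in the error above; other formats return null.
  const match = message.match(
    /User: (\S+) is not authorized to perform: (\S+) on resource: (\S+)/
  );
  if (!match) return null;
  const principal = match[1];
  const action = match[2];
  const resource = match[3];
  if (principal === undefined || action === undefined || resource === undefined) {
    return null;
  }
  return { principal, action, resource };
}

// Example with the message from the trace above.
const exampleMessage =
  "401 User: arn:aws:iam::822233328238:user/kk_labs_user_504951 is not authorized to perform: " +
  "bedrock-mantle:CreateInference on resource: arn:aws:bedrock-mantle:us-east-1:822233328238:project/default " +
  "because no identity-based policy allows the bedrock-mantle:CreateInference action";
const denied = parseAccessDenied(exampleMessage);
console.log(denied);
```

Here the denied action is `bedrock-mantle:CreateInference`, so the request was rejected by the account's IAM policy for that action, before any model-specific check is visible in the message.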

This is the content of my index.ts file. I was just trying to see how the LLM responds:

    import dotenv from 'dotenv';
    import OpenAI from 'openai';

    dotenv.config();

    const client = new OpenAI({
      apiKey: process.env.OPENAI_API_KEY,
      baseURL: process.env.OPENAI_BASE_URL,
    });

    const response = await client.chat.completions.create({
      model: 'meta.llama3-2-3b-instruct-v1:0',
      messages: [
        {
          role: 'user',
          content:
            'In a single sentence, why should someone choose KodeKloud over other platforms to learn DevOps?',
        },
      ],
    });

    console.log(response.choices[0]?.message.content);
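
The script above depends on `OPENAI_API_KEY` and `OPENAI_BASE_URL` being loaded from `.env`. To rule out a missing or empty variable as a factor, a small guard like the following could run before the client is created. This is only a sketch: `requireEnv` and `Env` are hypothetical names for this example, not something provided by `dotenv` or `openai`.

```typescript
// Hypothetical helper (not part of dotenv or openai): returns the value of a
// required variable, or throws with a clear message if it is missing or blank.
type Env = Record<string, string | undefined>;

function requireEnv(env: Env, name: string): string {
  const value = env[name];
  if (value === undefined || value.trim() === "") {
    throw new Error(`Missing environment variable: ${name}`);
  }
  return value;
}
```

In index.ts this would be called as `requireEnv(process.env, 'OPENAI_BASE_URL')` after `dotenv.config()`. In my case the error came back from the Bedrock endpoint, so both variables were evidently set; the guard just makes that explicit.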

The AWS AI Playground | KodeKloud page mentions:

- Models Permitted in Bedrock:
  - Llama 3.2 1B Instruct
  - Llama 3.2 3B Instruct
  - Titan Embeddings G1 - Text