I've attached all the commands I ran and their output.

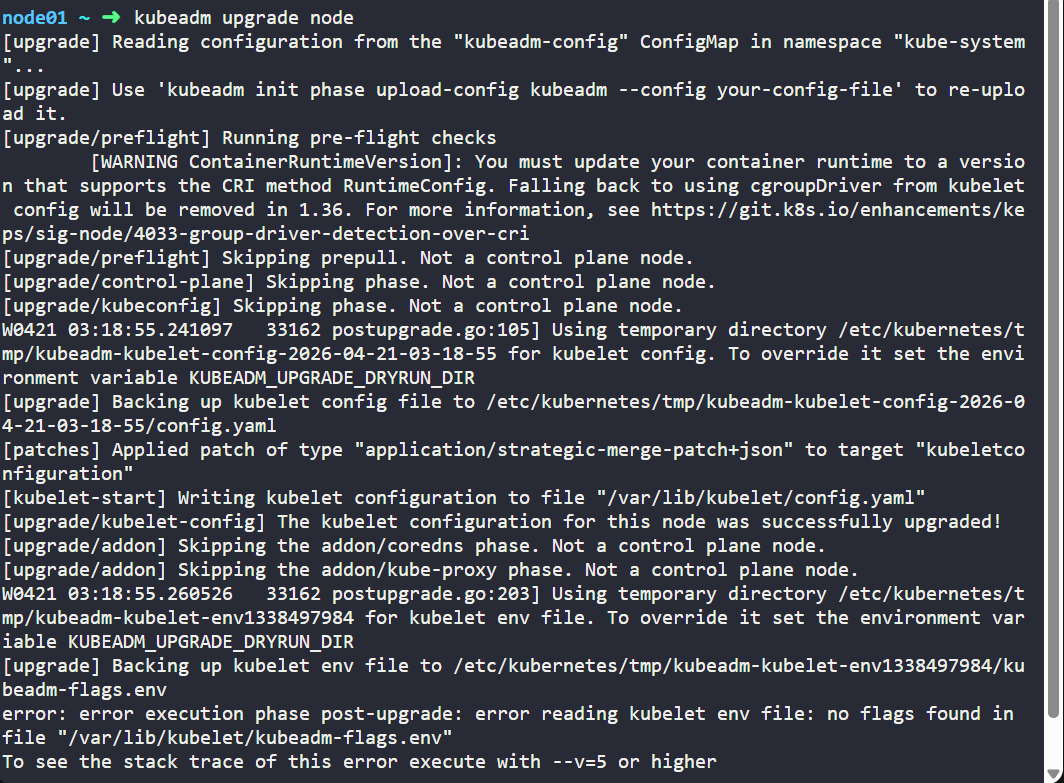

I restarted the kubelet on node01, but that didn't help.

Welcome to the KodeKloud Hands-On lab

controlplane ~ ➜ k drain controlplane

node/controlplane cordoned

error: unable to drain node "controlplane" due to error: cannot delete DaemonSet-managed Pods (use --ignore-daemonsets to ignore): kube-flannel/kube-flannel-ds-hhlxk, kube-system/kube-proxy-vvvx2, continuing command...

There are pending nodes to be drained:

controlplane

cannot delete DaemonSet-managed Pods (use --ignore-daemonsets to ignore): kube-flannel/kube-flannel-ds-hhlxk, kube-system/kube-proxy-vvvx2

controlplane ~ ✖ k drain controlplane --ignore-daemonsets

node/controlplane already cordoned

Warning: ignoring DaemonSet-managed Pods: kube-flannel/kube-flannel-ds-hhlxk, kube-system/kube-proxy-vvvx2

evicting pod kube-system/coredns-6678bcd974-mzxjq

evicting pod default/blue-759779556-shf76

evicting pod kube-system/coredns-6678bcd974-hpw7g

evicting pod default/blue-759779556-fc6z4

pod/blue-759779556-shf76 evicted

pod/blue-759779556-fc6z4 evicted

pod/coredns-6678bcd974-mzxjq evicted

pod/coredns-6678bcd974-hpw7g evicted

node/controlplane drained

controlplane ~ ➜ vim /etc/apt/sources.list.d/kubernetes.list
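
The `vim` edit itself is not shown. Judging by the `apt update` output that follows, it presumably pointed the repository at `v1.35`. A minimal sketch of the same change done with `sed`, assuming the file previously referenced `v1.34` (the version the later output shows being replaced) and uses the usual `pkgs.k8s.io` one-line layout. `bump_k8s_repo` is a hypothetical helper name, not an existing tool.

```shell
# Rewrite the Kubernetes apt repository minor version in a sources file.
# Usage: bump_k8s_repo FILE OLD NEW
#   e.g. bump_k8s_repo /etc/apt/sources.list.d/kubernetes.list v1.34 v1.35
bump_k8s_repo() {
    file=$1 old=$2 new=$3
    # Escape dots so "v1.34" is matched literally by sed.
    old_re=$(printf '%s' "$old" | sed 's/\./\\./g')
    tmp=$(mktemp) || return 1
    # Only touch the ".../stable:/<version>/deb" path segment.
    sed "s#/stable:/${old_re}/deb#/stable:/${new}/deb#" "$file" > "$tmp" &&
        cat "$tmp" > "$file"
    status=$?
    rm -f "$tmp"
    return "$status"
}
```

Running `apt update` afterwards, as in the transcript, refreshes the package index from the new repository.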

controlplane ~ ➜ apt update

Hit:2 https://download.docker.com/linux/ubuntu jammy InRelease

Get:1 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.35/deb InRelease [1,227 B]

Hit:3 http://archive.ubuntu.com/ubuntu jammy InRelease

Get:4 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.35/deb Packages [7,603 B]

Hit:5 http://security.ubuntu.com/ubuntu jammy-security InRelease

Hit:6 http://archive.ubuntu.com/ubuntu jammy-updates InRelease

Hit:7 http://archive.ubuntu.com/ubuntu jammy-backports InRelease

Fetched 8,830 B in 1s (12.9 kB/s)

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

89 packages can be upgraded. Run 'apt list --upgradable' to see them.

controlplane ~ ➜ apt-cache madison kubeadm

kubeadm | 1.35.4-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages

kubeadm | 1.35.3-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages

kubeadm | 1.35.2-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages

kubeadm | 1.35.1-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages

kubeadm | 1.35.0-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages
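
The version string passed to `apt-get install` below is the middle column of this table. As a small sketch, it could be extracted rather than typed by hand; `madison_versions` and `pick_version` are hypothetical helper names, and both simply read `apt-cache madison` output on stdin.

```shell
# Print the version column of `apt-cache madison <pkg>` output read from stdin.
madison_versions() {
    awk -F'|' 'NF >= 2 { gsub(/^[ \t]+|[ \t]+$/, "", $2); print $2 }'
}

# Print the first listed version that starts with the given prefix,
# e.g. "1.35.0-". Exits non-zero if no version matches.
pick_version() {
    madison_versions |
        awk -v p="$1" 'index($0, p) == 1 { print; found = 1; exit }
                       END { exit !found }'
}
```

For example, `apt-get install kubeadm="$(apt-cache madison kubeadm | pick_version 1.35.0-)"` would request the same package version as the command in the transcript.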

controlplane ~ ➜ apt-get install kubeadm=1.35.0-1.1

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following packages will be upgraded:

kubeadm

1 upgraded, 0 newly installed, 0 to remove and 88 not upgraded.

Need to get 12.4 MB of archives.

After this operation, 1,659 kB disk space will be freed.

Get:1 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.35/deb kubeadm 1.35.0-1.1 [12.4 MB]

Fetched 12.4 MB in 0s (30.5 MB/s)

debconf: delaying package configuration, since apt-utils is not installed

(Reading database ... 20567 files and directories currently installed.)

Preparing to unpack .../kubeadm_1.35.0-1.1_amd64.deb ...

Unpacking kubeadm (1.35.0-1.1) over (1.34.0-1.1) ...

Setting up kubeadm (1.35.0-1.1) ...

controlplane ~ ➜ kubeadm upgrade apply v1.35.0

[upgrade] Reading configuration from the "kubeadm-config" ConfigMap in namespace "kube-system"...

[upgrade] Use 'kubeadm init phase upload-config kubeadm --config your-config-file' to re-upload it.

[upgrade/preflight] Running preflight checks

[WARNING ContainerRuntimeVersion]: You must update your container runtime to a version that supports the CRI method RuntimeConfig. Falling back to using cgroupDriver from kubelet config will be removed in 1.36. For more information, see https://git.k8s.io/enhancements/keps/sig-node/4033-group-driver-detection-over-cri

[upgrade] Running cluster health checks

[upgrade/preflight] You have chosen to upgrade the cluster version to "v1.35.0"

[upgrade/versions] Cluster version: v1.34.0

[upgrade/versions] kubeadm version: v1.35.0

[upgrade] Are you sure you want to proceed? [y/N]: y

[upgrade/preflight] Pulling images required for setting up a Kubernetes cluster

[upgrade/preflight] This might take a minute or two, depending on the speed of your internet connection

[upgrade/preflight] You can also perform this action beforehand using 'kubeadm config images pull'

W0423 04:40:44.654582 18065 checks.go:906] detected that the sandbox image "registry.k8s.io/pause:3.6" of the container runtime is inconsistent with that used by kubeadm. It is recommended to use "registry.k8s.io/pause:3.10.1" as the CRI sandbox image.

[upgrade/control-plane] Upgrading your static Pod-hosted control plane to version "v1.35.0" (timeout: 5m0s)...

[upgrade/staticpods] Writing new Static Pod manifests to "/etc/kubernetes/tmp/kubeadm-upgraded-manifests1497172453"

[upgrade/staticpods] Preparing for "etcd" upgrade

[upgrade/staticpods] Renewing etcd-server certificate

[upgrade/staticpods] Renewing etcd-peer certificate

[upgrade/staticpods] Renewing etcd-healthcheck-client certificate

[upgrade/staticpods] Moving new manifest to "/etc/kubernetes/manifests/etcd.yaml" and backing up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2026-04-23-04-40-54/etcd.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This can take up to 5m0s

[apiclient] Found 1 Pods for label selector component=etcd

[upgrade/staticpods] Component "etcd" upgraded successfully!

[upgrade/etcd] Waiting for etcd to become available

[upgrade/staticpods] Preparing for "kube-apiserver" upgrade

[upgrade/staticpods] Renewing apiserver certificate

[upgrade/staticpods] Renewing apiserver-kubelet-client certificate

[upgrade/staticpods] Renewing front-proxy-client certificate

[upgrade/staticpods] Renewing apiserver-etcd-client certificate

[upgrade/staticpods] Moving new manifest to "/etc/kubernetes/manifests/kube-apiserver.yaml" and backing up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2026-04-23-04-40-54/kube-apiserver.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This can take up to 5m0s

[apiclient] Found 1 Pods for label selector component=kube-apiserver

[upgrade/staticpods] Component "kube-apiserver" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-controller-manager" upgrade

[upgrade/staticpods] Renewing controller-manager.conf certificate

[upgrade/staticpods] Moving new manifest to "/etc/kubernetes/manifests/kube-controller-manager.yaml" and backing up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2026-04-23-04-40-54/kube-controller-manager.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This can take up to 5m0s

[apiclient] Found 1 Pods for label selector component=kube-controller-manager

[upgrade/staticpods] Component "kube-controller-manager" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-scheduler" upgrade

[upgrade/staticpods] Renewing scheduler.conf certificate

[upgrade/staticpods] Moving new manifest to "/etc/kubernetes/manifests/kube-scheduler.yaml" and backing up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2026-04-23-04-40-54/kube-scheduler.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This can take up to 5m0s

[apiclient] Found 1 Pods for label selector component=kube-scheduler

[upgrade/staticpods] Component "kube-scheduler" upgraded successfully!

[upgrade/control-plane] The control plane instance for this node was successfully upgraded!

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

[upgrade/kubeconfig] The kubeconfig files for this node were successfully upgraded!

W0423 04:43:26.131685 18065 postupgrade.go:105] Using temporary directory /etc/kubernetes/tmp/kubeadm-kubelet-config-2026-04-23-04-43-26 for kubelet config. To override it set the environment variable KUBEADM_UPGRADE_DRYRUN_DIR

[upgrade] Backing up kubelet config file to /etc/kubernetes/tmp/kubeadm-kubelet-config-2026-04-23-04-43-26/config.yaml

[patches] Applied patch of type "application/strategic-merge-patch+json" to target "kubeletconfiguration"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[upgrade/kubelet-config] The kubelet configuration for this node was successfully upgraded!

[upgrade/bootstrap-token] Configuring bootstrap token and cluster-info RBAC rules

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

W0423 04:43:30.502914 18065 postupgrade.go:203] Using temporary directory /etc/kubernetes/tmp/kubeadm-kubelet-env3141699432 for kubelet env file. To override it set the environment variable KUBEADM_UPGRADE_DRYRUN_DIR

[upgrade] Backing up kubelet env file to /etc/kubernetes/tmp/kubeadm-kubelet-env3141699432/kubeadm-flags.env

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[upgrade] SUCCESS! A control plane node of your cluster was upgraded to "v1.35.0".

[upgrade] Now please proceed with upgrading the rest of the nodes by following the right order.

controlplane ~ ➜

controlplane ~ ➜ apt-get install kubelet=1.35.0-1.1

-bash: controlplane: command not found

controlplane ~ ✖ apt-get install kubelet=1.35.0-1.1

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following packages were automatically installed and are no longer required:

conntrack ethtool

Use 'apt autoremove' to remove them.

The following packages will be upgraded:

kubelet

1 upgraded, 0 newly installed, 0 to remove and 88 not upgraded.

Need to get 12.9 MB of archives.

After this operation, 1,085 kB disk space will be freed.

Get:1 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.35/deb kubelet 1.35.0-1.1 [12.9 MB]

Fetched 12.9 MB in 0s (39.0 MB/s)

debconf: delaying package configuration, since apt-utils is not installed

(Reading database ... 20567 files and directories currently installed.)

Preparing to unpack .../kubelet_1.35.0-1.1_amd64.deb ...

Unpacking kubelet (1.35.0-1.1) over (1.34.0-1.1) ...

Setting up kubelet (1.35.0-1.1) ...

controlplane ~ ➜ systemctl daemon-reload

systemctl restart kubelet

controlplane ~ ➜ k version

Client Version: v1.34.1

Kustomize Version: v5.7.1

Error from server (Forbidden): unknown

controlplane ~ ✖ k version

Client Version: v1.34.1

Kustomize Version: v5.7.1

Server Version: v1.35.0
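
Note that the client is still v1.34.1 while the server reports v1.35.0: `kubectl` itself was not upgraded anywhere in this transcript. A difference of one minor version is within the skew `kubectl` supports against the API server. A small sketch for computing that difference; `minor_of` and `minor_skew` are hypothetical helper names.

```shell
# Extract the minor number from a version such as "v1.35.0" or "1.34.1-1.1".
minor_of() {
    v=${1#v}                   # drop a leading "v" if present
    v=${v#*.}                  # drop the major component and its dot
    printf '%s\n' "${v%%.*}"   # keep everything before the next dot
}

# Print the absolute difference between the minor versions of two releases.
minor_skew() {
    a=$(minor_of "$1") b=$(minor_of "$2")
    d=$((a - b))
    if [ "$d" -lt 0 ]; then
        d=$((0 - d))
    fi
    printf '%s\n' "$d"
}
```

With the two versions shown above, `minor_skew v1.34.1 v1.35.0` prints `1`.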

controlplane ~ ➜ k unconrdon conrolplane

error: unknown command "unconrdon" for "kubectl"

Did you mean this?

uncordon

controlplane ~ ✖ k uncordon conrolplane

Error from server (NotFound): nodes "conrolplane" not found

controlplane ~ ✖ k uncordon controlplane

node/controlplane uncordoned

controlplane ~ ➜ k drain node01 --ignore-daemonsets

node/node01 cordoned

Warning: ignoring DaemonSet-managed Pods: kube-flannel/kube-flannel-ds-nwvxh, kube-system/kube-proxy-77kp2

evicting pod kube-system/coredns-7d764666f9-vqq96

evicting pod default/blue-759779556-ctj6t

evicting pod default/blue-759779556-57pm6

evicting pod default/blue-759779556-c85cn

evicting pod kube-system/coredns-7d764666f9-vl4bh

evicting pod default/blue-759779556-g89wm

evicting pod default/blue-759779556-q82k9

pod/blue-759779556-g89wm evicted

pod/blue-759779556-57pm6 evicted

pod/blue-759779556-ctj6t evicted

pod/blue-759779556-q82k9 evicted

pod/blue-759779556-c85cn evicted

pod/coredns-7d764666f9-vqq96 evicted

pod/coredns-7d764666f9-vl4bh evicted

node/node01 drained

controlplane ~ ➜ ssh node01

Welcome to Ubuntu 22.04.5 LTS (GNU/Linux 6.8.0-1047-gcp x86_64)

* Documentation: https://help.ubuntu.com

* Management: https://landscape.canonical.com

* Support: https://ubuntu.com/pro

This system has been minimized by removing packages and content that are

not required on a system that users do not log into.

To restore this content, you can run the 'unminimize' command.

node01 ~ ➜ vim /etc/apt/sources.list.d/kubernetes.list

node01 ~ ➜ apt update

apt-cache madison kubeadm

Get:2 https://download.docker.com/linux/ubuntu jammy InRelease [48.5 kB]

Get:3 http://security.ubuntu.com/ubuntu jammy-security InRelease [129 kB]

Get:4 https://download.docker.com/linux/ubuntu jammy/stable amd64 Packages [91.3 kB]

Hit:5 http://archive.ubuntu.com/ubuntu jammy InRelease

Get:1 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.35/deb InRelease [1,227 B]

Get:6 http://archive.ubuntu.com/ubuntu jammy-updates InRelease [128 kB]

Get:7 http://security.ubuntu.com/ubuntu jammy-security/main amd64 Packages [3,860 kB]

Get:8 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.35/deb Packages [7,603 B]

Get:9 http://security.ubuntu.com/ubuntu jammy-security/restricted amd64 Packages [6,862 kB]

Get:10 http://security.ubuntu.com/ubuntu jammy-security/universe amd64 Packages [1,292 kB]

Get:11 http://security.ubuntu.com/ubuntu jammy-security/multiverse amd64 Packages [61.6 kB]

Get:12 http://archive.ubuntu.com/ubuntu jammy-backports InRelease [127 kB]

Get:13 http://archive.ubuntu.com/ubuntu jammy-updates/universe amd64 Packages [1,601 kB]

Get:14 http://archive.ubuntu.com/ubuntu jammy-updates/multiverse amd64 Packages [69.9 kB]

Get:15 http://archive.ubuntu.com/ubuntu jammy-updates/restricted amd64 Packages [7,066 kB]

Get:16 http://archive.ubuntu.com/ubuntu jammy-updates/main amd64 Packages [4,188 kB]

Get:17 http://archive.ubuntu.com/ubuntu jammy-backports/universe amd64 Packages [35.7 kB]

Get:18 http://archive.ubuntu.com/ubuntu jammy-backports/main amd64 Packages [82.7 kB]

Fetched 25.7 MB in 2s (11.5 MB/s)

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

89 packages can be upgraded. Run 'apt list --upgradable' to see them.

kubeadm | 1.35.4-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages

kubeadm | 1.35.3-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages

kubeadm | 1.35.2-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages

kubeadm | 1.35.1-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages

kubeadm | 1.35.0-1.1 | https://pkgs.k8s.io/core:/stable:/v1.35/deb Packages

node01 ~ ➜ apt-get install kubeadm=1.35.0-1.1

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following held packages will be changed:

kubeadm

The following packages will be upgraded:

kubeadm

1 upgraded, 0 newly installed, 0 to remove and 88 not upgraded.

Need to get 12.4 MB of archives.

After this operation, 1,659 kB disk space will be freed.

Do you want to continue? [Y/n] Y

Get:1 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.35/deb kubeadm 1.35.0-1.1 [12.4 MB]

Fetched 12.4 MB in 0s (44.8 MB/s)

debconf: delaying package configuration, since apt-utils is not installed

(Reading database ... 17482 files and directories currently installed.)

Preparing to unpack .../kubeadm_1.35.0-1.1_amd64.deb ...

Unpacking kubeadm (1.35.0-1.1) over (1.34.0-1.1) ...

Setting up kubeadm (1.35.0-1.1) ...
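
The "held packages will be changed" notice above indicates that `kubeadm` was marked as held on node01; the install still went through after confirmation. A common pattern is to lift the hold, install the pinned version, and re-apply the hold. A minimal sketch of that sequence, where `run`, `DRY_RUN`, and `upgrade_pkg` are hypothetical names and `DRY_RUN=1` only prints the commands instead of executing them:

```shell
# Echo the command instead of executing it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# Usage: upgrade_pkg PACKAGE VERSION   e.g. upgrade_pkg kubeadm 1.35.0-1.1
upgrade_pkg() {
    pkg=$1 ver=$2
    run apt-mark unhold "$pkg" &&
    run apt-get install -y "$pkg=$ver" &&
    run apt-mark hold "$pkg"
}
```

The same helper would apply to the later `kubelet` install, which prints the same notice.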

node01 ~ ➜ kubeadm upgrade node

[upgrade] Reading configuration from the "kubeadm-config" ConfigMap in namespace "kube-system"...

[upgrade] Use 'kubeadm init phase upload-config kubeadm --config your-config-file' to re-upload it.

[upgrade/preflight] Running pre-flight checks

[WARNING ContainerRuntimeVersion]: You must update your container runtime to a version that supports the CRI method RuntimeConfig. Falling back to using cgroupDriver from kubelet config will be removed in 1.36. For more information, see https://git.k8s.io/enhancements/keps/sig-node/4033-group-driver-detection-over-cri

[upgrade/preflight] Skipping prepull. Not a control plane node.

[upgrade/control-plane] Skipping phase. Not a control plane node.

[upgrade/kubeconfig] Skipping phase. Not a control plane node.

W0423 04:56:33.536014 40165 postupgrade.go:105] Using temporary directory /etc/kubernetes/tmp/kubeadm-kubelet-config-2026-04-23-04-56-33 for kubelet config. To override it set the environment variable KUBEADM_UPGRADE_DRYRUN_DIR

[upgrade] Backing up kubelet config file to /etc/kubernetes/tmp/kubeadm-kubelet-config-2026-04-23-04-56-33/config.yaml

[patches] Applied patch of type "application/strategic-merge-patch+json" to target "kubeletconfiguration"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[upgrade/kubelet-config] The kubelet configuration for this node was successfully upgraded!

[upgrade/addon] Skipping the addon/coredns phase. Not a control plane node.

[upgrade/addon] Skipping the addon/kube-proxy phase. Not a control plane node.

W0423 04:56:33.553174 40165 postupgrade.go:203] Using temporary directory /etc/kubernetes/tmp/kubeadm-kubelet-env839360761 for kubelet env file. To override it set the environment variable KUBEADM_UPGRADE_DRYRUN_DIR

[upgrade] Backing up kubelet env file to /etc/kubernetes/tmp/kubeadm-kubelet-env839360761/kubeadm-flags.env

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

node01 ~ ➜ k get node

E0423 04:56:37.415693 40257 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:56:37.416846 40257 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:56:37.417829 40257 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:56:37.419900 40257 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:56:37.420857 40257 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

Error from server (NotFound): the server could not find the requested resource
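
One possible reading of this error, not confirmed by anything in the output: it may come from where `kubectl` is being run rather than from the upgrade itself. A kubeadm worker node does not normally carry an admin kubeconfig, and `kubectl` without one falls back to a default local address instead of the cluster's API server. A minimal sketch for checking whether the current host has a kubeconfig at all; `find_kubeconfig` is a hypothetical helper, and reporting only the first existing file is a simplification of how `kubectl` merges several.

```shell
# Print the first existing kubeconfig file kubectl would consider:
# each entry of $KUBECONFIG (colon-separated) if it is set,
# otherwise $HOME/.kube/config. Returns non-zero when none exists.
find_kubeconfig() {
    candidates=${KUBECONFIG:-$HOME/.kube/config}
    old_ifs=$IFS
    IFS=:
    for f in $candidates; do
        if [ -f "$f" ]; then
            IFS=$old_ifs
            printf '%s\n' "$f"
            return 0
        fi
    done
    IFS=$old_ifs
    return 1
}
```

For instance, `find_kubeconfig || echo "no kubeconfig on this host"` run on node01 would show whether this explanation applies.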

node01 ~ ✖ apt-get install kubelet=1.35.0-1.1

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

The following packages were automatically installed and are no longer required:

conntrack ethtool

Use 'apt autoremove' to remove them.

The following held packages will be changed:

kubelet

The following packages will be upgraded:

kubelet

1 upgraded, 0 newly installed, 0 to remove and 88 not upgraded.

Need to get 12.9 MB of archives.

After this operation, 1,085 kB disk space will be freed.

Do you want to continue? [Y/n] Y

Get:1 https://prod-cdn.packages.k8s.io/repositories/isv:/kubernetes:/core:/stable:/v1.35/deb kubelet 1.35.0-1.1 [12.9 MB]

Fetched 12.9 MB in 0s (48.0 MB/s)

debconf: delaying package configuration, since apt-utils is not installed

(Reading database ... 17482 files and directories currently installed.)

Preparing to unpack .../kubelet_1.35.0-1.1_amd64.deb ...

Unpacking kubelet (1.35.0-1.1) over (1.34.0-1.1) ...

Setting up kubelet (1.35.0-1.1) ...

node01 ~ ➜ systemctl daemon-reload

systemctl restart kubelet

node01 ~ ✖ systemctl restart kubelet

node01 ~ ✖ k get pod

E0423 04:59:16.440531 42247 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:59:16.441742 42247 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:59:16.442741 42247 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:59:16.443713 42247 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:59:16.445924 42247 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

Error from server (NotFound): the server could not find the requested resource

node01 ~ ✖ k get node

E0423 04:59:20.351906 42305 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:59:20.353077 42305 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:59:20.354211 42305 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:59:20.355321 42305 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

E0423 04:59:20.356283 42305 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: the server could not find the requested resource"

Error from server (NotFound): the server could not find the requested resource
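
The transcript stops with node01 still cordoned from the earlier `k drain node01`. If the `NotFound` errors are only a symptom of running `kubectl` on node01 (an assumption, not something the output proves), the remaining steps would be run from controlplane instead. A rough sketch; `run`, `DRY_RUN`, and `finish_worker_upgrade` are hypothetical names, and `DRY_RUN=1` only prints the commands:

```shell
# Echo the command instead of executing it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# Run on controlplane, after leaving the SSH session on the worker.
# Usage: finish_worker_upgrade NODE   e.g. finish_worker_upgrade node01
finish_worker_upgrade() {
    node=$1
    # Confirm the worker reports the new kubelet version, then allow scheduling again.
    run kubectl get nodes &&
    run kubectl uncordon "$node"
}
```

If `kubectl get nodes` on controlplane lists node01 at v1.35.0, the kubelet restart on node01 did take effect.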