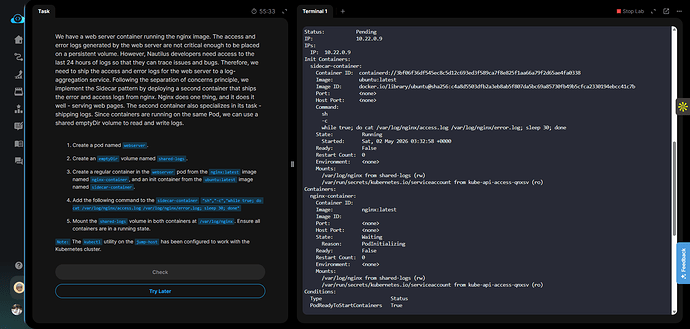

This concerns the Day 55 task of the 100 Days of DevOps practice labs. I followed the instructions exactly as provided, but the lab currently cannot be passed because the instructions contradict each other and the validation script appears to have a bug.

The Issue:

The instructions explicitly ask to deploy the sidecar-container as an init container with an infinite loop command: while true; do cat /var/log/nginx/access.log /var/log/nginx/error.log; sleep 30; done.

Because an ordinary init container must run to completion before the main containers start, placing an infinite loop inside it permanently blocks the Pod from reaching the Running state. The Pod stays in Init:0/1 and its phase remains Pending. However, the prompt also asks to “Ensure all containers are in a running state,” which cannot be satisfied with the manifest the instructions describe.
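The blocking behaviour described above can be modelled in a few lines. This is a simplified sketch of the rule, not the actual kubectl or kubelet code: it assumes ordinary init containers that must each exit with code 0 before the main containers start. The function names `init_progress` and `main_containers_can_start` and the trimmed status dictionary are hypothetical stand-ins for the `initContainerStatuses` entries in the evidence log.

```python
def init_progress(init_statuses):
    """Return (completed, total) for a list of init container statuses.

    Simplified model: an init container counts as completed only when its
    state is `terminated` with exit code 0.
    """
    completed = 0
    for status in init_statuses:
        terminated = status.get("state", {}).get("terminated")
        if terminated is None or terminated.get("exitCode") != 0:
            break  # later init containers cannot start until this one finishes
        completed += 1
    return completed, len(init_statuses)


def main_containers_can_start(init_statuses):
    """Main containers start only after every init container has completed."""
    completed, total = init_progress(init_statuses)
    return completed == total


# Trimmed from the live state: the sidecar is running, so it never terminates.
looping_sidecar = [
    {"name": "sidecar-container",
     "state": {"running": {"startedAt": "2026-05-02T03:32:58Z"}}},
]

completed, total = init_progress(looping_sidecar)
print(f"Init:{completed}/{total}")                 # Init:0/1
print(main_containers_can_start(looping_sidecar))  # False
```

Under this model the loop can never move the count past 0/1, which matches the status I see on the Pod.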

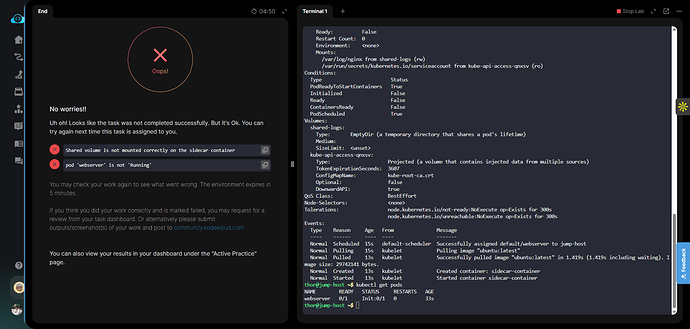

The grader script then gives two errors:

“pod ‘webserver’ is not ‘Running’” (caused by the infinite loop in the init container).

“Shared volume is not mounted correctly on the sidecar container” (this looks like a bug in the validation script: it seems to check the spec.containers array for the volume mount instead of the spec.initContainers array, even though the prompt explicitly asked for an init container).

I have attached the full live state of the cluster below. It shows that the volume is mounted under initContainers and that the exact command was used.
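The mount location can also be checked mechanically. The sketch below parses the `last-applied-configuration` annotation from the evidence log (whitespace added for readability) and reports which spec section holds the sidecar's mount. The helper name `find_mounts` is hypothetical, and this is only my guess at the kind of check involved; I cannot see the validation script itself.

```python
import json

# last-applied-configuration annotation from the evidence log
# (line breaks added for readability; content unchanged).
LAST_APPLIED = """
{"apiVersion":"v1","kind":"Pod",
 "metadata":{"annotations":{},"name":"webserver","namespace":"default"},
 "spec":{
  "containers":[{"image":"nginx:latest","name":"nginx-container",
    "volumeMounts":[{"mountPath":"/var/log/nginx","name":"shared-logs"}]}],
  "initContainers":[{"command":["sh","-c","while true; do cat /var/log/nginx/access.log /var/log/nginx/error.log; sleep 30; done"],
    "image":"ubuntu:latest","name":"sidecar-container",
    "volumeMounts":[{"mountPath":"/var/log/nginx","name":"shared-logs"}]}],
  "volumes":[{"emptyDir":{},"name":"shared-logs"}]}}
"""


def find_mounts(pod, container_name, volume_name):
    """Return the spec sections in which `container_name` mounts `volume_name`."""
    sections = []
    for section in ("containers", "initContainers"):
        for container in pod["spec"].get(section, []):
            if container["name"] != container_name:
                continue
            mounts = container.get("volumeMounts", [])
            if any(m["name"] == volume_name for m in mounts):
                sections.append(section)
    return sections


pod = json.loads(LAST_APPLIED)
print(find_mounts(pod, "sidecar-container", "shared-logs"))  # ['initContainers']
print(find_mounts(pod, "nginx-container", "shared-logs"))    # ['containers']
```

A check that only walks `spec.containers` would find no container named sidecar-container at all, which would be consistent with the second grader error.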

Could you please manually mark my task as ‘Success’ so I can unlock Day 56, and kindly forward this to the content team to fix the validation script and prompt?

Evidence log (kubectl get pod -o yaml and kubectl describe pod): I will attach it as a text file.

My YAML for pod.yaml:

    apiVersion: v1
    kind: Pod
    metadata:
      name: webserver
    spec:
      volumes:
        - name: shared-logs
          emptyDir: {}
      containers:
        - name: nginx-container
          image: nginx:latest
          volumeMounts:
            - name: shared-logs
              mountPath: /var/log/nginx
      initContainers:
        - name: sidecar-container
          image: ubuntu:latest
          volumeMounts:
            - name: shared-logs
              mountPath: /var/log/nginx
          command: ["sh", "-c", "while true; do cat /var/log/nginx/access.log /var/log/nginx/error.log; sleep 30; done"]

=== 1. ORIGINAL POD YAML ===

    apiVersion: v1
    kind: Pod
    metadata:
      name: webserver
    spec:
      volumes:
        - name: shared-logs
          emptyDir: {}
      containers:
        - name: nginx-container
          image: nginx:latest
          volumeMounts:
            - name: shared-logs
              mountPath: /var/log/nginx
      initContainers:
        - name: sidecar-container
          image: ubuntu:latest
          volumeMounts:
            - name: shared-logs
              mountPath: /var/log/nginx
          command: ["sh", "-c", "while true; do cat /var/log/nginx/access.log /var/log/nginx/error.log; sleep 30; done"]

=== 2. LIVE KUBERNETES STATE ===

    apiVersion: v1
    kind: Pod
    metadata:
      annotations:
        kubectl.kubernetes.io/last-applied-configuration: |
          {"apiVersion":"v1","kind":"Pod","metadata":{"annotations":{},"name":"webserver","namespace":"default"},"spec":{"containers":[{"image":"nginx:latest","name":"nginx-container","volumeMounts":[{"mountPath":"/var/log/nginx","name":"shared-logs"}]}],"initContainers":[{"command":["sh","-c","while true; do cat /var/log/nginx/access.log /var/log/nginx/error.log; sleep 30; done"],"image":"ubuntu:latest","name":"sidecar-container","volumeMounts":[{"mountPath":"/var/log/nginx","name":"shared-logs"}]}],"volumes":[{"emptyDir":{},"name":"shared-logs"}]}}
      creationTimestamp: "2026-05-02T03:32:56Z"
      generation: 1
      name: webserver
      namespace: default
      resourceVersion: "1794"
      uid: accccf14-b7f5-4556-a0df-73be604a3c5e
    spec:
      containers:
      - image: nginx:latest
        imagePullPolicy: Always
        name: nginx-container
        resources: {}
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
        volumeMounts:
        - mountPath: /var/log/nginx
          name: shared-logs
        - mountPath: /var/run/secrets/kubernetes.io/serviceaccount
          name: kube-api-access-qnxsv
          readOnly: true
      dnsPolicy: ClusterFirst
      enableServiceLinks: true
      initContainers:
      - command:
        - sh
        - -c
        - while true; do cat /var/log/nginx/access.log /var/log/nginx/error.log; sleep
          30; done
        image: ubuntu:latest
        imagePullPolicy: Always
        name: sidecar-container
        resources: {}
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
        volumeMounts:
        - mountPath: /var/log/nginx
          name: shared-logs
        - mountPath: /var/run/secrets/kubernetes.io/serviceaccount
          name: kube-api-access-qnxsv
          readOnly: true
      nodeName: jump-host
      preemptionPolicy: PreemptLowerPriority
      priority: 0
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      serviceAccount: default
      serviceAccountName: default
      terminationGracePeriodSeconds: 30
      tolerations:
      - effect: NoExecute
        key: node.kubernetes.io/not-ready
        operator: Exists
        tolerationSeconds: 300
      - effect: NoExecute
        key: node.kubernetes.io/unreachable
        operator: Exists
        tolerationSeconds: 300
      volumes:
      - emptyDir: {}
        name: shared-logs
      - name: kube-api-access-qnxsv
        projected:
          defaultMode: 420
          sources:
          - serviceAccountToken:
              expirationSeconds: 3607
              path: token
          - configMap:
              items:
              - key: ca.crt
                path: ca.crt
              name: kube-root-ca.crt
          - downwardAPI:
              items:
              - fieldRef:
                  apiVersion: v1
                  fieldPath: metadata.namespace
                path: namespace
    status:
      conditions:
      - lastProbeTime: null
        lastTransitionTime: "2026-05-02T03:32:58Z"
        observedGeneration: 1
        status: "True"
        type: PodReadyToStartContainers
      - lastProbeTime: null
        lastTransitionTime: "2026-05-02T03:32:56Z"
        message: 'containers with incomplete status: [sidecar-container]'
        observedGeneration: 1
        reason: ContainersNotInitialized
        status: "False"
        type: Initialized
      - lastProbeTime: null
        lastTransitionTime: "2026-05-02T03:32:56Z"
        message: 'containers with unready status: [nginx-container]'
        observedGeneration: 1
        reason: ContainersNotReady
        status: "False"
        type: Ready
      - lastProbeTime: null
        lastTransitionTime: "2026-05-02T03:32:56Z"
        message: 'containers with unready status: [nginx-container]'
        observedGeneration: 1
        reason: ContainersNotReady
        status: "False"
        type: ContainersReady
      - lastProbeTime: null
        lastTransitionTime: "2026-05-02T03:32:56Z"
        observedGeneration: 1
        status: "True"
        type: PodScheduled
      containerStatuses:
      - image: nginx:latest
        imageID: ""
        lastState: {}
        name: nginx-container
        ready: false
        restartCount: 0
        started: false
        state:
          waiting:
            reason: PodInitializing
        volumeMounts:
        - mountPath: /var/log/nginx
          name: shared-logs
        - mountPath: /var/run/secrets/kubernetes.io/serviceaccount
          name: kube-api-access-qnxsv
          readOnly: true
          recursiveReadOnly: Disabled
      hostIP: 10.244.73.129
      hostIPs:
      - ip: 10.244.73.129
      initContainerStatuses:
      - containerID: containerd://3bf06f36df545ec8c5d12c693ed3f589ca7f8e825f1aa66a79f2d65ae4fa0338
        image: docker.io/library/ubuntu:latest
        imageID: docker.io/library/ubuntu@sha256:c4a8d5503dfb2a3eb8ab5f807da5bc69a85730fb49b5cfca2330194ebcc41c7b
        lastState: {}
        name: sidecar-container
        ready: false
        resources: {}
        restartCount: 0
        started: true
        state:
          running:
            startedAt: "2026-05-02T03:32:58Z"
        volumeMounts:
        - mountPath: /var/log/nginx
          name: shared-logs
        - mountPath: /var/run/secrets/kubernetes.io/serviceaccount
          name: kube-api-access-qnxsv
          readOnly: true
          recursiveReadOnly: Disabled
      observedGeneration: 1
      phase: Pending
      podIP: 10.22.0.9
      podIPs:
      - ip: 10.22.0.9
      qosClass: BestEffort
      startTime: "2026-05-02T03:32:56Z"

=== 3. POD EVENTS & STATUS ===

    Name:             webserver
    Namespace:        default
    Priority:         0
    Service Account:  default
    Node:             jump-host/10.244.73.129
    Start Time:       Sat, 02 May 2026 03:32:56 +0000
    Labels:
    Annotations:
    Status:           Pending
    IP:               10.22.0.9
    IPs:
      IP:  10.22.0.9
    Init Containers:
      sidecar-container:
        Container ID:  containerd://3bf06f36df545ec8c5d12c693ed3f589ca7f8e825f1aa66a79f2d65ae4fa0338
        Image:         ubuntu:latest
        Image ID:      docker.io/library/ubuntu@sha256:c4a8d5503dfb2a3eb8ab5f807da5bc69a85730fb49b5cfca2330194ebcc41c7b
        Port:          <none>
        Host Port:     <none>
        Command:
          sh
          -c
          while true; do cat /var/log/nginx/access.log /var/log/nginx/error.log; sleep 30; done
        State:          Running
          Started:      Sat, 02 May 2026 03:32:58 +0000
        Ready:          False
        Restart Count:  0
        Environment:    <none>
        Mounts:
          /var/log/nginx from shared-logs (rw)
          /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-qnxsv (ro)
    Containers:
      nginx-container:
        Container ID:
        Image:          nginx:latest
        Image ID:
        Port:           <none>
        Host Port:      <none>
        State:          Waiting
          Reason:       PodInitializing
        Ready:          False
        Restart Count:  0
        Environment:    <none>
        Mounts:
          /var/log/nginx from shared-logs (rw)
          /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-qnxsv (ro)
    Conditions:
      Type                        Status
      PodReadyToStartContainers   True
      Initialized                 False
      Ready                       False
      ContainersReady             False
      PodScheduled                True
    Volumes:
      shared-logs:
        Type:       EmptyDir (a temporary directory that shares a pod's lifetime)
        Medium:
        SizeLimit:  <unset>
      kube-api-access-qnxsv:
        Type:                    Projected (a volume that contains injected data from multiple sources)
        TokenExpirationSeconds:  3607
        ConfigMapName:           kube-root-ca.crt
        Optional:                false
        DownwardAPI:             true
    QoS Class:                   BestEffort
    Node-Selectors:
    Tolerations:                 node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
                                 node.kubernetes.io/unreachable:NoExecute op=Exists for 300s
    Events:
      Type    Reason     Age    From               Message
      Normal  Scheduled  4m57s  default-scheduler  Successfully assigned default/webserver to jump-host
      Normal  Pulling    4m57s  kubelet            Pulling image "ubuntu:latest"
      Normal  Pulled     4m55s  kubelet            Successfully pulled image "ubuntu:latest" in 1.419s (1.419s including waiting). Image size: 29742141 bytes.
      Normal  Created    4m55s  kubelet            Created container: sidecar-container
      Normal  Started    4m55s  kubelet            Started container sidecar-container

    thor@jump-host ~$ ls
    evidence.txt  pod.yaml
    thor@jump-host ~$

I will attach all the evidence below. I hope you respond positively to this issue.